Defining what “enterprise grade” means is difficult in any industry because vendors tend to define the category to flatter their own products. The term gets thrown around in pitch decks and on landing pages, but its meaning shifts depending on who's selling.

We’re here to strip that back.

In this article, we’ll cover what the technology actually does at an architectural level. This includes composite AI, retrieval-augmented generation, and the shift toward agentic execution. Then, we’ll teach you how to evaluate platforms based on what they deliver, not what they claim.

If you work in an organization handling 10,000+ monthly call minutes, you need specifics, not slogans, and we’ll get right into that.

Let’s start.

What Is Enterprise Conversational AI?

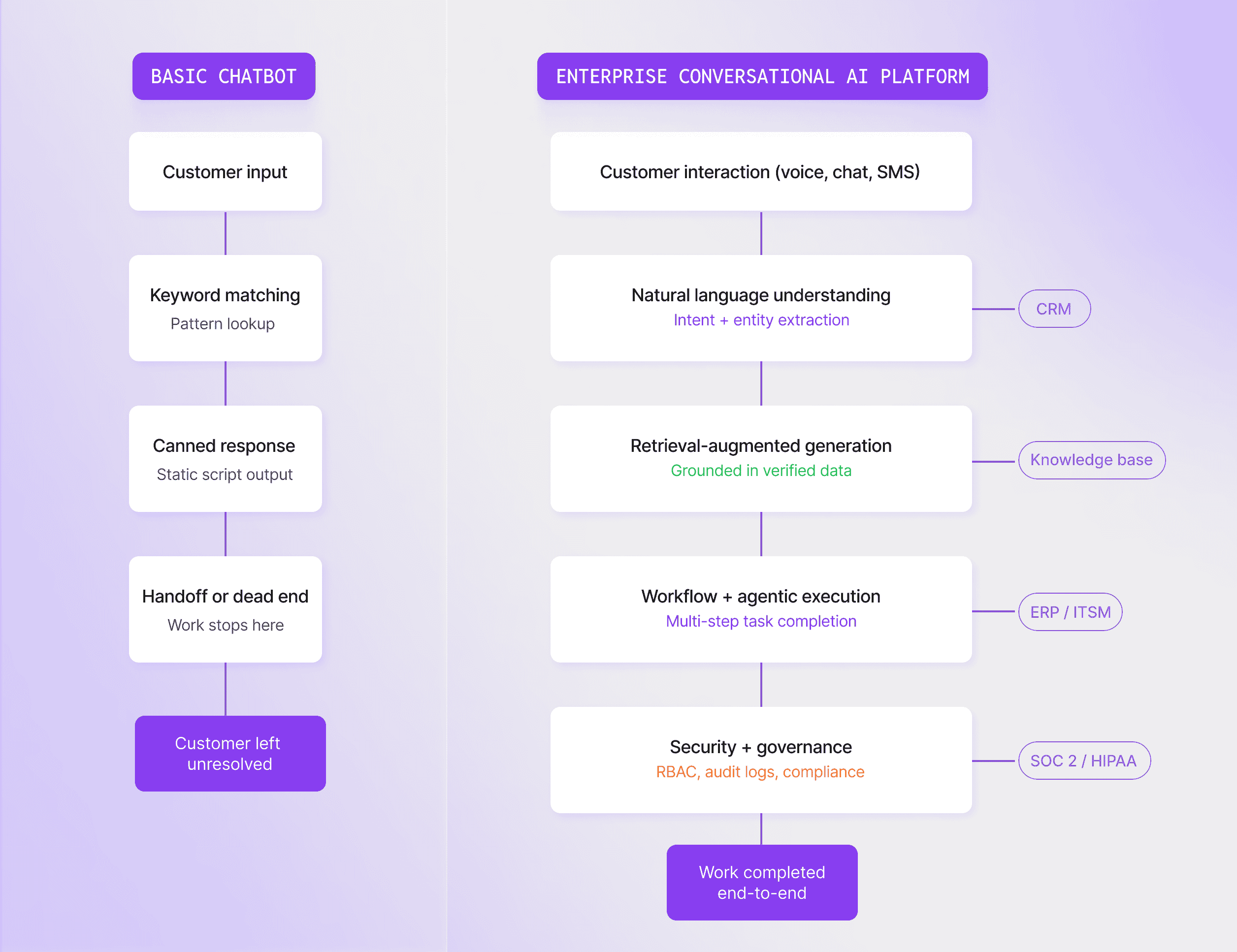

Enterprise conversational AI refers to platforms that combine multiple AI technologies – large language models, natural language understanding, retrieval-augmented generation (RAG), and behavioral guardrails – to automate complex business conversations across voice, chat, SMS, and messaging at an organizational scale.

As you can see, this is a lot more complicated than a chatbot answering FAQs from a static script, and for good reason. Consumer conversational AI tools like ChatGPT lack the integration layers, access controls, audit trails, and telephony infrastructure required for enterprise deployment. You can't plug ChatGPT into your contact center and expect it to enforce HIPAA, route sensitive calls, or hand off to a human agent with full context.

Enterprise platforms connect directly to CRMs, ITSM tools, and HRIS systems to execute multi-step workflows, enforce compliance policies, and maintain governance across thousands of daily interactions.

Here's an example: A customer calls about a billing dispute. A “normal chatbot” will just send over the relevant help article. An enterprise AI agent pulls the account history, checks the refund policy, processes the correction, and updates the CRM. That's the difference between responding and actually completing the work.

The category has moved through three distinct phases:

- Rule-based scripts: Keyword matching to canned responses.

- LLM-augmented systems: Natural language understanding with retrieval grounding.

- Agentic AI: The emerging model where AI doesn't just understand and respond, but executes goals autonomously (more on this in the workflow automation section below).

The market reflects this momentum. The Business Research Company valued the enterprise conversational AI platform market at $11.61 billion in 2025, with projections reaching $49.53 billion by 2030 at a 33.6% CAGR.
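If you want to sanity-check growth figures like these before they go into a business case, the arithmetic is short. Here is a minimal sketch in Python, assuming the 2025 and 2030 values are the two endpoints of a five-year compounding window (the report may define its base year differently). The function names are ours, not anything from the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` once per year for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted above, in billions of USD.
implied_rate = cagr(11.61, 49.53, years=5)
projected_2030 = project(11.61, 0.336, years=5)

print(f"Implied CAGR, 2025 to 2030: {implied_rate:.2%}")
print(f"2030 value at a 33.6% CAGR: ${projected_2030:.2f}B")
```

Both numbers land close to the quoted ones. The small gap is the kind of rounding or base-year difference worth asking about when a vendor cites market data.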

Benefits of Enterprise Conversational AI

Generic benefit claims are everywhere in this space – "reduce costs," "improve CX," "scale faster."

That's not useful when you're building a business case, which calls for verified outcomes.

And as conversational AI deployments became more common, the ROI bar moved. A Futurum Group survey of 830 IT decision-makers found that the share naming productivity gains as their top success metric dropped from 23.8% to 18.0%, while the share naming direct financial impact – revenue growth and profitability combined – nearly doubled to 21.7%. CFOs aren't asking "how many hours did we save?" anymore. They're asking "what did this do to the P&L?"

Key Capabilities of Enterprise Conversational AI

Enterprise platforms are always composite. No single AI component works in isolation, and their architecture typically stacks four layers:

- Natural language understanding (NLU): Classifies intent and extracts meaning.

- Retrieval-augmented generation (RAG): Grounds responses in verified company data.

- Workflow automation and agentic execution: Carries tasks through to completion.

- Security, compliance, and governance: Enforces policies at every step.

A single customer interaction can pass through all four in seconds, so let’s look at those in more detail.

Natural Language Understanding and Intent Recognition

NLU is the first layer – the one that determines whether everything downstream works or falls apart.

When a customer says, "I need to reset my VPN access," the NLU engine breaks that down into its components, intent (access reset) and entity (VPN), then routes the request to the correct automation workflow.

It doesn’t need to do keyword matching or use a static decision tree. The system understands what the person is trying to accomplish and acts on it.

Modern platforms layer LLMs on top of traditional NLU pipelines, letting them handle open-ended, unpredictable conversations with far less training data than older systems required. Still, the deterministic NLU pipeline hasn't disappeared entirely. For high-compliance, high-volume intent classification, predictability still matters more than flexibility. Most enterprise platforms run both in parallel.
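To make the hybrid setup concrete, here is a minimal Python sketch of the idea. Everything in it is hypothetical: `NLUResult`, `RULES`, and `classify_with_llm` are names we made up, the regular expression stands in for a trained deterministic classifier, and the LLM call is a placeholder. For simplicity it tries the deterministic layer first and only then falls back, rather than running both in parallel.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class NLUResult:
    intent: str
    entities: dict = field(default_factory=dict)
    source: str = "deterministic"  # which layer produced the result


# Hypothetical deterministic rules for high-compliance intents.
RULES = [
    (re.compile(r"\breset\b.*\b(?P<system>vpn|email|password)\b", re.I), "access_reset"),
]


def classify_deterministic(utterance: str) -> Optional[NLUResult]:
    """Return a result only when a known rule matches."""
    for pattern, intent in RULES:
        match = pattern.search(utterance)
        if match:
            return NLUResult(intent, {"system": match.group("system").lower()})
    return None


def classify_with_llm(utterance: str) -> NLUResult:
    """Placeholder: a real system would call a language model here."""
    return NLUResult("unknown", {}, source="llm_fallback")


def understand(utterance: str) -> NLUResult:
    # Predictable path first; flexible path only when no rule applies.
    return classify_deterministic(utterance) or classify_with_llm(utterance)


# Hypothetical mapping from intent to an automation workflow.
WORKFLOWS: dict[str, Callable[[NLUResult], str]] = {
    "access_reset": lambda r: f"start access reset for {r.entities['system'].upper()}",
}


def route(result: NLUResult) -> str:
    handler = WORKFLOWS.get(result.intent)
    return handler(result) if handler else "hand off to human agent"


print(route(understand("I need to reset my VPN access")))
```

The point of the split is that the regulated, high-volume intents get the same answer every time, while anything the rules don't recognize still has somewhere to go.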

Where this plays out:

- Customer support: FAQ resolution, troubleshooting, routing complex issues to human agents with full context passed along.

- IT helpdesk: Password resets, software provisioning, and access requests.

- HR: Benefits queries, onboarding workflows, policy questions.

For a deeper look at how these use cases work in production, Synthflow's contact center automation guide covers real-world deployment patterns.

Important: Accuracy degrades at volume without purpose-built infrastructure. An NLU engine that classifies intent correctly at 1,000 interactions a day might start breaking down at 100,000. So when you’re choosing your enterprise conversational AI, you need to think about scale. But more on that later!

RAG and Knowledge Grounding

Retrieval-augmented generation (RAG) grounds LLM responses in verified company data before generating output. This reduces hallucinations and gives you an AI agent whose answers are backed by a source, instead of one that just sounds confident.

How it works in practice:

- A customer or employee asks a question.

- The RAG pipeline retrieves relevant documents from approved knowledge bases, such as policy docs, product manuals, and CRM records.

- That context gets fed to the LLM, constraining its output to verified facts rather than probabilistic guessing.
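The three steps above can be sketched in a few lines of Python. This is a simplified illustration, not how any particular platform implements it: the knowledge base is a hard-coded list, retrieval ranks documents by shared words (a stand-in for the vector search a production system would typically use), and the final LLM call is left out, so the sketch stops at the grounded prompt. All names are assumptions of ours.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str


# Hypothetical approved knowledge base.
KNOWLEDGE_BASE = [
    Document("refund-policy", "Refunds are issued within 14 days of a billing error report."),
    Document("vpn-manual", "To reset VPN access, verify identity and request a new token."),
    Document("pto-policy", "Employees accrue paid time off every month."),
]

STOPWORDS = {"a", "an", "the", "to", "of", "and", "do", "is", "are"}


def tokenize(text: str) -> set[str]:
    """Lowercase words and numbers, minus a few common stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question: str, top_k: int = 2) -> list[Document]:
    """Rank documents by shared-word count and drop those with no overlap."""
    query = tokenize(question)
    scored = [(len(query & tokenize(doc.text)), doc) for doc in KNOWLEDGE_BASE]
    scored = [(score, doc) for score, doc in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(question: str, docs: list[Document]) -> str:
    """Constrain the model to the retrieved context."""
    context = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in docs)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


question = "How long do refunds take after a billing error?"
docs = retrieve(question)
print(build_prompt(question, docs))
```

Note the instruction to say so when the context has no answer. Retrieval narrows what the model sees, but the prompt is what tells it not to improvise beyond that.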

Without RAG, you're relying on the LLM's training data, which can be outdated, incomplete, or just wrong. The result would be a fluent, confident-sounding response that is entirely incorrect, and that’s a problem.

A single hallucinated response in healthcare or financial services creates real liability. We're not talking about a minor inconvenience: We're talking about incorrect policy information, wrong dosage guidance, or fabricated account details delivered with complete confidence. Real, dangerous situations.

In enterprise deployments, RAG powers three main use cases: Search across internal documentation, real-time policy lookup during live customer interactions, and agent assist. The latter is where the system surfaces relevant knowledge to human agents mid-conversation so they don't have to dig for it themselves.

Workflow Automation and Agentic AI

First, we need to establish that basic automation executes predefined sequences. A password reset, for example, follows a fixed path: Verify identity → reset credentials → send confirmation. Every step is scripted in advance. If the situation doesn't match the script, the system stalls.
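Here is what that fixed path looks like as a minimal Python sketch. The step functions and the `Context` dictionary are hypothetical stand-ins for real identity and credential systems.

```python
from typing import Callable

Context = dict


def verify_identity(ctx: Context) -> bool:
    return ctx.get("identity_verified", False)


def reset_credentials(ctx: Context) -> bool:
    ctx["temporary_password_issued"] = True
    return True


def send_confirmation(ctx: Context) -> bool:
    ctx["confirmation_sent"] = True
    return True


# The fixed path: every step is known in advance and runs in order.
PASSWORD_RESET: list[Callable[[Context], bool]] = [
    verify_identity,
    reset_credentials,
    send_confirmation,
]


def run_script(steps: list[Callable[[Context], bool]], ctx: Context) -> str:
    for step in steps:
        if not step(ctx):
            # No alternative branch exists, so the script simply stops.
            return f"stalled at {step.__name__}"
    return "completed"


print(run_script(PASSWORD_RESET, {"identity_verified": True}))   # completed
print(run_script(PASSWORD_RESET, {"identity_verified": False}))  # stalled at verify_identity
```

When the caller can't be verified, nothing in the script knows what to do next. That dead end is the stall described above.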

Agentic AI works differently. Instead of following a script, it receives a goal – "resolve this customer complaint" – and autonomously determines the steps:

- Retrieve account history.

- Check the refund policy.

- Process the correction.

- Update the CRM.

- Send a confirmation.

The path isn't predetermined, and it’s up to the agent to figure it out.
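A rough Python sketch of that loop is below. Treat it as an illustration under heavy assumptions: the tools are empty stubs, the refund limit of 100 is invented, the escalation branch is our addition, and `plan_next_step` is a hand-written rule set standing in for the LLM that would choose the next action in a real agent.

```python
from typing import Callable, Optional

State = dict


def retrieve_account_history(s: State) -> None:
    s["history"] = {"disputed_amount": s["disputed_amount"]}


def check_refund_policy(s: State) -> None:
    # Hypothetical policy: disputes up to 100 can be corrected automatically.
    s["refund_allowed"] = s["history"]["disputed_amount"] <= 100


def process_correction(s: State) -> None:
    s["corrected"] = True


def update_crm(s: State) -> None:
    s["crm_updated"] = True


def send_confirmation(s: State) -> None:
    s["confirmed"] = True


def escalate_to_human(s: State) -> None:
    s["escalated"] = True


def plan_next_step(s: State) -> Optional[Callable[[State], None]]:
    """Stub planner: choose the next tool from the current state."""
    if "history" not in s:
        return retrieve_account_history
    if "refund_allowed" not in s:
        return check_refund_policy
    if not s["refund_allowed"]:
        return None if s.get("escalated") else escalate_to_human
    if not s.get("corrected"):
        return process_correction
    if not s.get("crm_updated"):
        return update_crm
    if not s.get("confirmed"):
        return send_confirmation
    return None  # goal reached


def run_agent(state: State, max_steps: int = 10) -> list[str]:
    """Loop until the planner has nothing left to do or the step cap is hit."""
    trace = []
    for _ in range(max_steps):
        step = plan_next_step(state)
        if step is None:
            break
        step(state)
        trace.append(step.__name__)
    return trace


print(run_agent({"disputed_amount": 40}))
print(run_agent({"disputed_amount": 400}))
```

The same goal produces two different paths depending on what the agent finds along the way. The `max_steps` cap is a cheap safety net so a confused planner can't loop forever.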

This type of workflow is already happening, and BCG documents real outcomes from agentic deployments across industries.

The same research shows that effective AI agents accelerate business processes by 30% to 50% overall. And the scale is expanding fast – Juniper Research projects that AI-agent-automated interactions will grow from 3.3 billion in 2025 to over 34 billion by 2027.

A big part of what's making this possible is the Model Context Protocol (MCP), an open standard that enables agents to connect to external tools and APIs in a standardized way.

But autonomy without governance is a liability.

When agents act on their own, controls become non-negotiable. BCG recommends explicit agent ownership (someone is accountable for every deployed agent), monetary thresholds for human approval (automatic refunds only up to a set limit), audit logging for every action taken, and tested rollback plans in case something goes wrong.
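Three of those controls (a named owner, a monetary threshold, and an audit trail) are simple enough to sketch in Python. The class, field names, and the limit of 50 are all hypothetical, and rollback is left out to keep the example short.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GuardedAgent:
    name: str
    owner: str                 # person or team accountable for this agent
    auto_refund_limit: float   # above this amount, a human must approve
    audit_log: list = field(default_factory=list)

    def _log(self, action: str, detail: dict) -> None:
        """Record every action with a timestamp and the accountable owner."""
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": self.name,
            "owner": self.owner,
            "action": action,
            **detail,
        })

    def request_refund(self, amount: float) -> str:
        if amount <= self.auto_refund_limit:
            self._log("refund_issued", {"amount": amount})
            return "issued"
        self._log("refund_pending_approval", {"amount": amount})
        return "needs_human_approval"


agent = GuardedAgent("billing-agent", owner="support-ops", auto_refund_limit=50.0)
print(agent.request_refund(20.0))    # issued
print(agent.request_refund(500.0))   # needs_human_approval
print(len(agent.audit_log))          # 2
```

Both the approved and the blocked request end up in the log. An audit trail that only records successes won't help much when someone asks why a refund was held.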

In practice, the highest-value agentic use cases right now are end-to-end contact center complaint resolution, insurance claims processing, and outbound sales qualification – scenarios where agents handle multi-turn conversations, make decisions, and book meetings without human intervention.

Security, Compliance, and Governance

Every vendor page mentions compliance. Very few explain what it actually requires.

If you're evaluating platforms for a regulated industry, here's what to look for.

Certifications and agreements to require

- SOC 2 Type II: The baseline for any enterprise SaaS.

- ISO 27001: Information security management, increasingly expected by EU buyers.

- HIPAA BAAs: Mandatory if you're handling protected health information.

- GDPR DPAs: Required for any EU data processing.

- PCI DSS: Required if payment data enters the conversation.

Governance tooling to verify

- Role-based access control (RBAC): So your marketing team can't edit a compliance-sensitive healthcare agent.

- Audit logging: HIPAA's six-year documentation retention requirement is commonly applied to audit logs; ask your vendor what their default is.

- Version control on agent flows: If you can't roll back a bad deployment, you don't have governance.

- Multi-environment deployment: Dev, staging, production. If the vendor can't demo this during evaluation, they don't have it.
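To show how RBAC and version control fit together, here is a small Python sketch. The roles, permissions, and the `AgentFlow` class are invented for illustration and don't reflect any vendor's actual data model.

```python
# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "compliance_admin": {"view", "edit", "rollback"},
    "marketing": {"view"},
}


def require(role: str, action: str) -> None:
    """Raise if the role is not allowed to perform the action."""
    if action not in ROLE_PERMISSIONS.get(role, set()):
        raise PermissionError(f"role '{role}' may not {action}")


class AgentFlow:
    def __init__(self, name: str) -> None:
        self.name = name
        self.versions: list[dict] = []  # published configs, oldest first

    def publish(self, role: str, config: dict) -> int:
        require(role, "edit")
        self.versions.append(dict(config))
        return len(self.versions)  # version number just published

    def rollback(self, role: str) -> dict:
        require(role, "rollback")
        if len(self.versions) < 2:
            raise ValueError("no earlier version to roll back to")
        self.versions.pop()
        return self.versions[-1]


flow = AgentFlow("healthcare-intake")
flow.publish("compliance_admin", {"greeting": "v1"})
flow.publish("compliance_admin", {"greeting": "v2"})
print(flow.rollback("compliance_admin"))  # back on the v1 config

try:
    flow.publish("marketing", {"greeting": "promo"})
except PermissionError as err:
    print(err)
```

During a vendor demo, the equivalent test is to ask them to publish a change as a restricted user and then roll back a deployment while you watch.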

⚠️ The EU AI Act factor: Contact center AI that influences loan decisions or insurance claim outcomes can cross into "high-risk" classification under the EU AI Act, triggering mandatory conformity assessments. Few vendors have addressed this in detail publicly, so it's worth asking about directly.

“Meeting compliance requirements comes with real costs. Production AI agents handling sensitive data add meaningful spend in security tooling, audit preparation, and ongoing monitoring. Plan for this upfront rather than discovering it mid-deployment.”

— Hakob Astabatsyan, Co-founder and CEO, Synthflow

As one reference point, Synthflow holds SOC 2, HIPAA, PCI DSS, GDPR, and ISO 27001 certifications – an example of what full enterprise-grade coverage looks like in practice. If you want to see for yourself, our trust vault lists the supporting documentation, some of which is publicly accessible.

Enterprise Conversational AI Platforms Compared

When you start comparing tools, you might ask yourself as a buyer, "Which platform is the leader?" However, that’s the wrong question.

The Gartner Critical Capabilities report, for example, evaluates vendors across distinct use-case scenarios because no single platform leads across all of them. The right question is: "Who fits my deployment context?"

This is why we've organized the sections below by category rather than ranking. Contact center specialists solve different problems than cloud ecosystem platforms, and treating them as interchangeable leads to mismatches that surface months into deployment.

Still, before getting into specific platforms, it's worth addressing the bigger strategic decision: Should you build, buy, or extend?

According to an analysis by CIO.com, the most successful organizations buy foundation models where the technology is commoditized, build custom workflows where differentiation matters, and extend their existing enterprise platforms.

With that framing in mind, here's how the major platform categories break down.

Contact Center and Voice Automation Platforms

These are platforms purpose-built for high-volume contact center automation and voice-led customer engagement. If your primary use case is handling thousands of inbound interactions daily, this is the category to evaluate first.

Cognigy (now NiCE Cognigy)

Named a 2025 Gartner Leader for Conversational AI, Cognigy was purpose-built for contact center AI with dedicated integrations for Genesys, Avaya, and NICE. In July 2025, NICE agreed to acquire Cognigy for approximately $955M.

Features and integrations:

- 100+ languages with advanced multilingual NLU.

- Visual low-code conversation builder with LLM orchestration.

- SaaS and on-prem deployment (one of the few offering both).

- Agent Copilot for real-time human agent assist.


🤔 Want a deeper look at Cognigy? Take a look at our detailed Cognigy AI review for in-depth feature analysis and pricing information.

Google Cloud Customer Engagement Suite

Rather than plugging into your contact center, Google Cloud Customer Engagement Suite is the contact center – an end-to-end suite built on Gemini models and positioned furthest for Completeness of Vision in the 2025 Gartner Magic Quadrant.

Features and integrations:

- Conversational agents, agent assist, conversational insights, and Google CCaaS in one application.

- 100+ languages and dialects with native multilingual support.

- Real-time sentiment analytics across all touchpoints.

- Pre-built agents for retail, restaurants, and vertical-specific use cases.


boost.ai

Boost.ai is a regulated-industry specialist, built specifically for environments where accuracy and auditability outweigh everything else.

Features and integrations:

- Hybrid NLU combining symbolic and neural processing for high-accuracy intent classification.

- Native integrations with Genesys Cloud CX, Salesforce, Zendesk, and Microsoft Teams.

- No-code conversation builder with generative AI layered on top.

- Built-in live chat (Boost Human Chat) for agent handoff.


Synthflow

Synthflow is an AI-native platform with owned telephony infrastructure, built from the ground up on LLMs rather than retrofitted from legacy IVR or NLP systems.

Features and integrations:

- Proprietary telephony stack with sub-100ms SBC-level processing (no Twilio dependency).

- Visual Flow Designer for multi-prompt conversation flows with conditional logic and guardrails.

- Deploys across voice, chat, SMS, and WhatsApp from a single platform.

- 200+ integrations, including Salesforce, HubSpot, Freshworks, and native CCaaS connectors (Cisco, Five9, Avaya, Genesys, NICE, RingCentral).

- Full white-label capability for embedding branded AI agents into existing products.


For a direct side-by-side across all five vendor categories, Synthflow's enterprise comparison covers pricing, deployment timelines, and integrations.

Cloud Provider and Ecosystem Platforms

These platforms live inside broader cloud ecosystems. The trade-off is straightforward: You get speed and native integrations within your existing stack, but you'll hit limits when complex, multi-channel contact center needs arrive.

Microsoft Copilot Studio

Microsoft Copilot Studio is a low-code agent builder designed to extend the Microsoft ecosystem into conversational AI.

- Native integrations with M365, Teams, SharePoint, and Dynamics 365.

- WhatsApp channel support and MCP server connections.

- Agent builder using natural language prompts – no deep technical expertise required.

- Included with Microsoft 365 Copilot licenses; pay-as-you-go available for standalone.

Best for: Organizations already on Microsoft 365 that need internal employee productivity agents. Voice and contact center capabilities lag dedicated platforms.

IBM watsonx Orchestrate

IBM's enterprise AI agent platform is now centered on watsonx Orchestrate as the unified orchestration layer for AI agents and assistants across business functions.

- On-prem and hybrid cloud deployment for data residency and compliance.

- Prebuilt AI agents for HR, sales, procurement, finance, and customer service (watsonx Agents).

- Integrates with 80+ enterprise applications, including Salesforce, SAP, and Workday.

- Built on Granite LLMs with IP indemnity and explainability features.

Best for: Regulated industries (healthcare, financial services, government) that need on-prem deployment options and deep compliance controls.

Amazon Lex

Amazon Lex is an AWS-native conversational AI service powered by the same technology as Alexa.

- Deep integration with Amazon Connect, Lambda, DynamoDB, and the broader AWS ecosystem.

- Natural language bot builder with prompt-based creation.

- Pay-as-you-go pricing with no minimum contracts.

- Multi-region deployment for global operations.

Best for: Engineering-led teams with existing AWS commitments who want full infrastructure control. Less intuitive than dedicated conversational AI platforms for non-technical users.

Kore.ai

Kore.ai is another 2025 Gartner Leader with the broadest scope across both customer-facing and internal enterprise workflows.

- Multi-agent orchestration for cross-departmental automation (IT, HR, finance, customer service).

- 250+ pre-built agent and tool templates across banking, healthcare, retail, and more.

- Strategic partnerships with both Microsoft (Azure, Teams, Copilot Studio) and AWS (Bedrock, Connect).

- Customers include Deutsche Bank, Pfizer, Morgan Stanley, and AMD.

Best for: Organizations that need a single platform spanning customer service and internal workflows (IT helpdesk, HR, procurement) with multi-agent orchestration across departments.

🤔 Like the sound of the tool, but it doesn’t quite fit your needs? Check out our detailed guide on Kore.ai alternatives!

In our experience, the "extend" path – building conversational AI on top of your existing cloud ecosystem – offers the fastest time-to-value within stacks you already run. But organizations with complex multi-channel contact center requirements, particularly voice-heavy operations, will reach their limits faster than with a purpose-built platform.

How to Evaluate Enterprise Conversational AI Platforms

Gartner Peer Insights publishes mandatory feature requirements for the conversational AI platforms category, but the taxonomy is buried behind a directory listing that most buyers never dig into. We'll make it usable here.

For a more detailed walkthrough focused on voice AI specifically, Synthflow's 2026 enterprise buying guide covers pricing transparency, latency benchmarks, orchestration capabilities, and total cost of ownership.

Enterprise-Grade Features and Integration Depth

"Enterprise-grade" gets used loosely. Here's what it should actually mean when you're sitting across from a vendor during evaluation.

- Multilingual NLP: Verify the platform handles your target languages natively, not through post-hoc translation layers that degrade accuracy. There's a big difference between "we support 30 languages" and "our NLU classifies intent accurately in 30 languages."

- RAG support: Confirm the platform connects to your knowledge bases and grounds responses in verified data. Ask specifically how it handles conflicting information across sources – that's where most implementations break down.

- Integration depth: Native CRM/ITSM/HRIS connectors vs. webhook-only. Gartner Peer Insights lists prebuilt enterprise system connectors as a mandatory feature for the category. If the vendor only offers generic webhooks, you'll be building and maintaining those integrations yourself.

- Deployment model: Cloud-native (most platforms), on-prem (Cognigy, IBM), or hybrid. Match to your data residency and regulatory requirements – don't let a vendor talk you into a deployment model that doesn't fit your compliance posture.

- Governance tooling: RBAC, audit logging, version control on agent flows, multi-environment deployment (dev/staging/production).
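One way to keep vendor evaluations honest is to turn the checklist above into a weighted scorecard. The Python sketch below is only a starting point: the weights and the 0-to-5 ratings are placeholders we invented, and you should set them to match your own priorities.

```python
# Hypothetical weights for the five criteria above; they sum to 1.0.
CRITERIA_WEIGHTS = {
    "multilingual_nlp": 0.20,
    "rag_support": 0.20,
    "integration_depth": 0.25,
    "deployment_model": 0.15,
    "governance_tooling": 0.20,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 0-5 ratings into one score; refuse incomplete scorecards."""
    missing = set(CRITERIA_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return sum(CRITERIA_WEIGHTS[name] * ratings[name] for name in CRITERIA_WEIGHTS)


# Placeholder ratings for two unnamed vendors.
vendor_a = {
    "multilingual_nlp": 4,
    "rag_support": 5,
    "integration_depth": 3,
    "deployment_model": 4,
    "governance_tooling": 5,
}
vendor_b = {
    "multilingual_nlp": 5,
    "rag_support": 3,
    "integration_depth": 5,
    "deployment_model": 3,
    "governance_tooling": 3,
}

for name, ratings in (("Vendor A", vendor_a), ("Vendor B", vendor_b)):
    print(f"{name}: {weighted_score(ratings):.2f} / 5")
```

Rejecting incomplete scorecards is deliberate. A criterion nobody rated usually means a question nobody asked the vendor.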

Scaling Challenges and Measuring ROI

The single biggest shift in how enterprises evaluate conversational AI ROI happened in the last year. As we mentioned earlier, productivity gave up its spot as the top success metric to direct P&L impact – revenue growth and profitability combined. In plain terms, "we saved 200 agent hours" doesn't cut it anymore. "We reduced cost-per-resolution by 40%" does.

The problem is that most organizations try to prove ROI by going broad. The better option is to start narrow, prove the math, then expand. The highest-ROI starting points share three traits: high volume, repeatability, and ease of measurement against a known baseline. Think tier-1 support, appointment scheduling, or password resets. These give you clean before-and-after numbers your CFO can actually work with.
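The before-and-after math for a single workflow is simple enough to sketch in Python. The cost and volume figures below are made-up placeholders chosen to give round numbers; swap in your own baseline and pilot data.

```python
def cost_per_resolution(total_cost: float, resolutions: int) -> float:
    """Total spend on a workflow divided by the issues it resolved."""
    if resolutions <= 0:
        raise ValueError("resolutions must be positive")
    return total_cost / resolutions


def percent_reduction(before: float, after: float) -> float:
    """How far `after` sits below `before`, as a percentage."""
    return (before - after) / before * 100


# Hypothetical monthly figures for one tier-1 support workflow.
baseline = cost_per_resolution(total_cost=50_000, resolutions=10_000)
pilot = cost_per_resolution(total_cost=30_000, resolutions=10_000)

print(f"Baseline: ${baseline:.2f} per resolution")
print(f"Pilot:    ${pilot:.2f} per resolution")
print(f"Reduction: {percent_reduction(baseline, pilot):.1f}%")
```

Keeping the resolution count in the denominator matters. If the pilot cuts cost but also resolves fewer issues, the per-resolution figure will show it.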

Still, scaling from there introduces its own challenges.

- Governance infrastructure becomes more complex as you move into agentic deployments (covered in the workflow automation section above).

- Data quality across integrated systems degrades as you connect more sources.

- Accuracy maintenance gets harder as use cases multiply – what worked at three workflows may not hold at thirty without dedicated monitoring.

"The enterprises that get ROI fastest aren't the ones deploying the most sophisticated AI. They're the ones that pick a single, high-volume workflow – inbound support, appointment scheduling, lead qualification – prove the economics in 60 days, and then expand. The technology is ready. The bottleneck is almost always scope discipline."

— Hakob Astabatsyan, Co-founder and CEO, Synthflow

Choosing the Right Enterprise Conversational AI Platform

The frameworks, platform categories, and ROI methodology we've covered in this article apply regardless of which vendor you evaluate. The specifics of your deployment context – call volume, channels, regulatory environment, existing infrastructure – will narrow the field faster than any ranking or analyst quadrant.

But there's one more question worth asking: Does the AI actually complete the work, or does it just close the ticket?

Most platforms are optimized for the front end of an interaction, such as understanding the question, generating a response, and logging the conversation. But the moment complexity or something unexpected enters the picture (a refund that needs processing, an appointment that needs rescheduling across systems, a claim that requires verification and approval), the AI steps aside and a human picks up the rest. The ticket shows as "resolved" but the work isn't actually done.

That gap between closing a ticket and completing the task is where real value gets lost. It's also where the market is heading. The next generation of enterprise AI won't be priced per minute or per interaction – it will be priced per outcome, because the AI will carry the work through from start to finish.

Synthflow is building toward exactly that. Not just AI that talks, but AI that executes end-to-end – across voice, chat, and SMS – so the outcome itself is the deliverable.

For readers who want a direct side-by-side of pricing, deployment timelines, compliance, and integrations across Cognigy, Kore.ai, Parloa, Sierra, and PolyAI, Synthflow's enterprise comparison provides a useful starting point.

And for organizations evaluating enterprise-grade AI agents across voice, chat, and SMS, Synthflow's tailored demo is built for companies handling 10,000+ call minutes monthly, with forward-deployed engineers who guide the process from pilot to production. Talk to our team to find out how Synthflow can help you implement conversational AI at an enterprise level.