HIPAA compliant AI agents do exist. But "HIPAA compliant" is a self-attested label – there's no government certification body, no official registry, and no seal a vendor has to earn. So, if any company can put the phrase on their website, how can you tell which are legitimate?

It’s relatively simple: You just need to ensure that the vendor's technical and legal controls meet the requirements of the HIPAA Security Rule. This includes signed Business Associate Agreements, end-to-end encryption, structured audit trails, access controls, and verifiable third-party certifications.

If you’re in a healthcare organization looking to deploy AI agents for scheduling, patient intake, call routing, and after-hours coverage, you need a solid compliance framework: something you can take into vendor meetings and use to choose a system you can trust.

In this article, we'll cover the five technical and legal layers that separate genuine compliance from marketing, why voice AI agents carry a higher compliance bar than text-based tools, and how to evaluate vendors against each requirement before any patient data goes near the system.

For a broader look at how AI is being applied across healthcare workflows, see our guide to conversational AI in healthcare.

Why HIPAA Compliance Matters More For AI Agents

Traditional healthcare software, such as your EHR, your scheduling platform, and your legacy call routing system, stores and retrieves data from predefined fields. It's structured, bounded, and relatively predictable from a compliance standpoint.

AI agents are different. And the difference matters for three specific reasons.

- AI agents process protected health information (PHI) dynamically. They don't just retrieve a record – they interpret, synthesize, and act on information in real time. That creates compliance exposure at every step of the conversation, not just at the point of storage.

- AI models can be trained on or retain patient data without the organization's knowledge. Consumer-grade tools are the most obvious example: Staff paste patient information into free ChatGPT, Claude, or Gemini to summarize notes or draft communications – and, depending on the product and its default settings, those inputs may be retained or used for model training. According to the 2025 symplr Compass Survey, 86% of healthcare IT executives report shadow IT in their health systems, up from 74% three years ago.

- AI agents synthesize information across source boundaries in ways that break traditional access controls. Role-based access control works cleanly in conventional software. An AI agent querying multiple connected systems can surface information that a user's role doesn't cover – because the synthesis step doesn't respect the same boundaries.

⚠️ Important: Standard ChatGPT is not HIPAA compliant. OpenAI's BAA-eligible enterprise API is a separate product entirely, requiring specific configuration and a signed Business Associate Agreement before it can legally touch PHI. That distinction is frequently missed, and the consequences aren't minor – HIPAA violation penalties for willful neglect can reach $2.19 million if not corrected within 30 days.

Each of the reasons above creates a compliance exposure that static EHR systems and legacy call routing simply don't have.

The Financial And Regulatory Case For Getting This Right

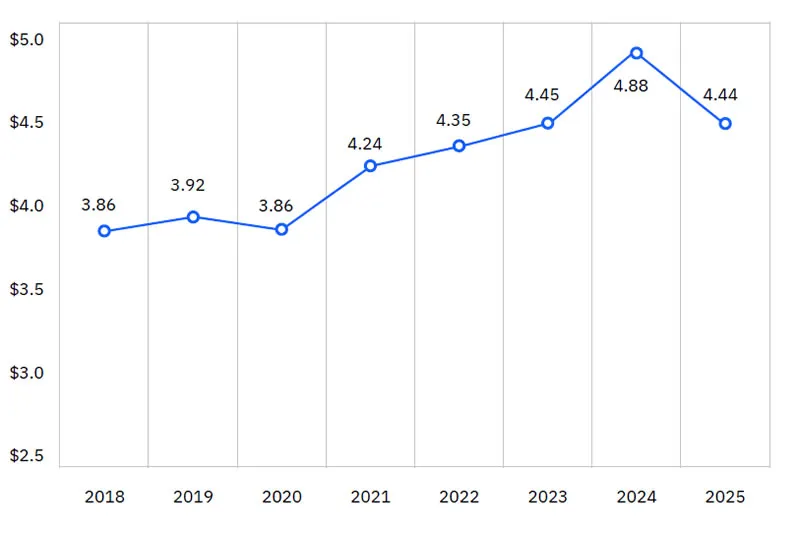

The cost of getting it wrong is concrete. Healthcare data breaches average $7.42 million per incident – the highest average breach cost of any industry for the 14th consecutive year, according to IBM's 2025 Cost of a Data Breach Report. That's about 1.7 times the cross-industry average of $4.44 million.

The regulatory picture is also shifting. On December 27, 2024, HHS published a Notice of Proposed Rulemaking proposing the most significant update to the HIPAA Security Rule in two decades. Among the proposed changes: Making AES-256 encryption and multi-factor authentication mandatory rather than "addressable," and shortening breach notification timelines from 60 to 30 days. AI agents deployed today need to be built against where the rules are heading, not just what's technically required at this moment.

Voice AI agents sit at the top of the compliance risk curve among administrative use cases. The moment a patient self-identifies on a call, that audio stream becomes real-time ePHI – requiring encryption during the live stream, structured logging of agent decisions mid-call, and recording controls that comply with HIPAA's minimum necessary standard.

What Makes An AI Agent HIPAA Compliant?

Most vendor compliance claims look identical from the outside – a badge, a mention of SOC 2, a note that they "take privacy seriously." The only way to tell the difference is to go layer by layer through what the system actually does.

Here's what to verify at each one.

Layer 1: BAAs Built For AI

A signed Business Associate Agreement (BAA) is the legal baseline, but standard templates were written before AI was a deployment consideration. They don't cover model training on PHI, subcontractor coverage for the cloud provider hosting the AI, or what happens to patient data after the contract ends. Privacy attorneys are increasingly finding AI systems collecting PHI in ways that exceed original BAA terms.

Provisions to verify: Explicit prohibition on PHI for model training, downstream business associate coverage, post-termination data destruction timelines, and strict data minimization requirements.

Layer 2: Encryption And Zero-Data Retention

The technical floor is AES-256 at rest and TLS 1.2+ in transit. The December 2024 HHS NPRM proposes making these mandatory rather than "addressable," so vendors treating them as optional are already behind.
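As a rough illustration of the in-transit half of that floor, the sketch below builds a client-side TLS context that refuses anything older than TLS 1.2, using only Python's standard `ssl` module. The function name `make_tls_context` is a hypothetical helper, not part of any vendor's SDK. Encryption at rest is not shown, because the Python standard library has no AES implementation and the right approach depends on the storage layer in use.

```python
import ssl


def make_tls_context() -> ssl.SSLContext:
    """Build a client TLS context that rejects protocol versions below TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Make the minimum protocol version explicit instead of relying on defaults.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_tls_context()
```

A context like this can be passed to standard-library clients such as `http.client.HTTPSConnection(host, context=ctx)`, so the version floor is enforced in code rather than assumed.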

Zero-data retention goes further: PHI exists only in memory during the active session, is never written to persistent storage, never enters training pipelines, and is purged at session end.
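The session lifecycle described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of how any particular vendor implements it: `EphemeralSession` and `phi_session` are hypothetical names, and clearing a Python dictionary does not guarantee the underlying memory is zeroed. The point is the shape of the pattern: PHI lives only in an in-memory object, and the purge runs at session end even if the call handler fails.

```python
from contextlib import contextmanager
from typing import Dict, Iterator, Optional


class EphemeralSession:
    """Holds session PHI in process memory only; nothing here touches disk."""

    def __init__(self, session_id: str) -> None:
        self.session_id = session_id
        self._phi: Dict[str, str] = {}

    def put(self, field: str, value: str) -> None:
        self._phi[field] = value

    def get(self, field: str) -> Optional[str]:
        return self._phi.get(field)

    def purge(self) -> None:
        # Drop every reference to session PHI.
        self._phi.clear()

    def is_empty(self) -> bool:
        return not self._phi


@contextmanager
def phi_session(session_id: str) -> Iterator[EphemeralSession]:
    session = EphemeralSession(session_id)
    try:
        yield session
    finally:
        # Runs at session end, including when the handler raises.
        session.purge()


with phi_session("call-001") as session:
    session.put("patient_name", "Jane Doe")  # made-up example value
    during = session.get("patient_name")

after = session.get("patient_name")
```

Inside the `with` block the value is available; once the block exits, the lookup returns `None`. A real deployment would also need to confirm that logs, caches, and crash dumps never capture the same fields.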

Here’s what to ask vendors directly:

- Dedicated or shared LLM instance?

- Session data written to any persistent cache?

- Does the cloud provider's DPA confirm no retention?

Layer 3: Audit Trails That Hold Up Under OCR Investigation

The HIPAA Security Rule requires procedures to review records tracking ePHI access and detect security incidents. In practice, that means logging every interaction with actor, action, patient identifier, timestamp, and success/failure, and storing those logs in write-once systems that can't be altered. Without immutable logs, compliance can't be demonstrated when it actually matters.
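To make the log structure concrete, here is a minimal sketch of an append-only audit log carrying the five fields named above. It assumes a hash chain as a stand-in for write-once storage: each entry includes the hash of the previous one, so any later alteration is detectable. That makes the log tamper-evident rather than truly immutable. Genuine write-once behavior is a storage-layer feature that application code cannot provide by itself. The class and field names are illustrative, not taken from any vendor's product.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON so the same entry always hashes to the same value.
    canonical = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self._entries = []

    def append(self, actor: str, action: str, patient_id: str, success: bool) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {
            "actor": actor,
            "action": action,
            "patient_id": patient_id,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "success": success,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False means an entry was altered or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("agent:scheduler", "read_appointments", "patient-123", True)
log.append("user:frontdesk-7", "reschedule", "patient-123", False)
intact_before = log.verify()

log._entries[0]["action"] = "delete_record"  # simulate after-the-fact tampering
intact_after = log.verify()
```

Verification passes on the untouched log and fails once the first entry is edited, which is the property an investigator would want to see demonstrated.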

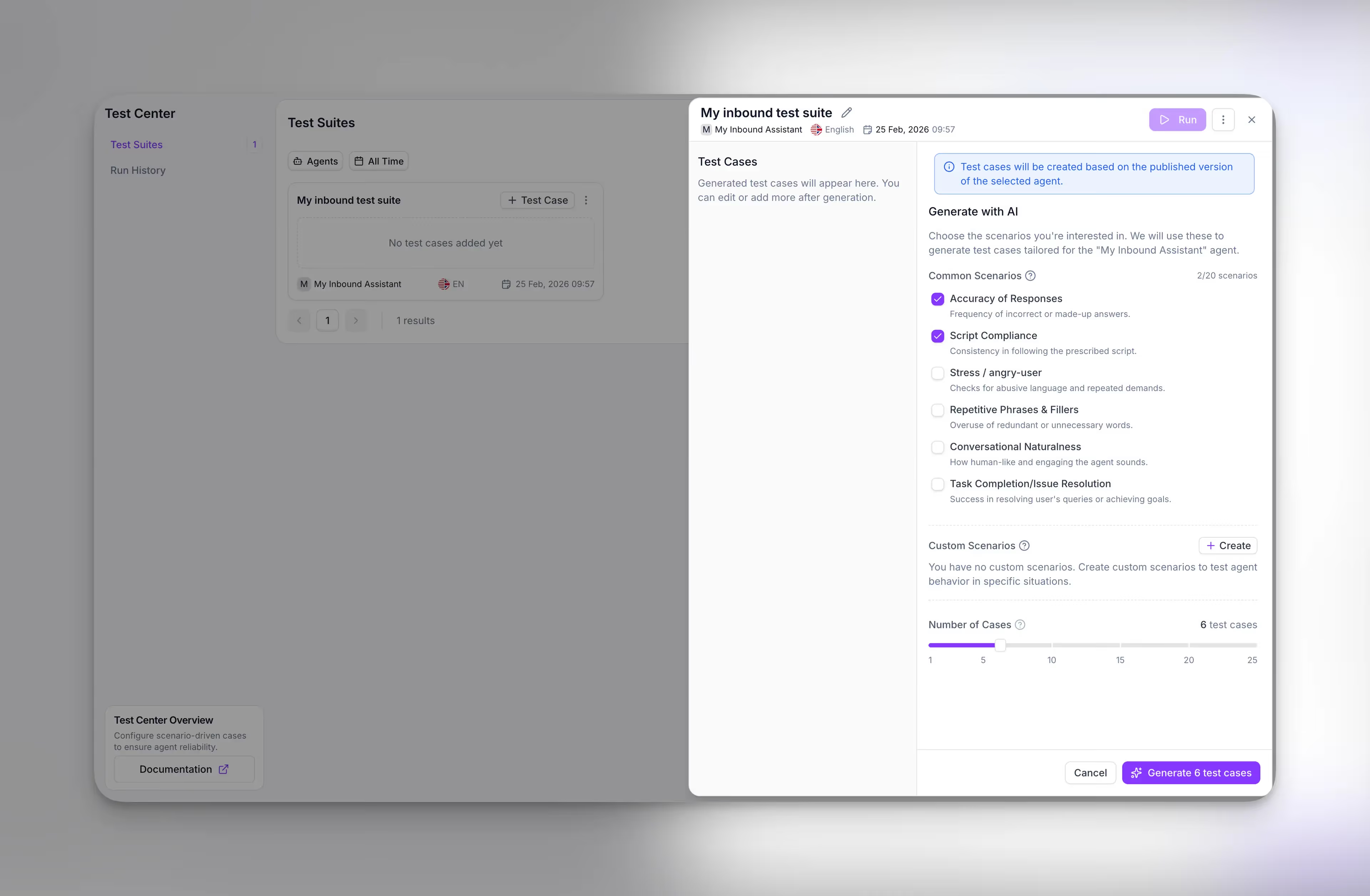

Synthflow's Command Center logs text, audio, and API interactions with collaborative annotations, and a recording opt-out toggle gives compliance teams granular control over which calls are captured – addressing HIPAA's minimum necessary standard at the infrastructure level.

Layer 4: AI-Specific Access Controls

Traditional role-based access control works cleanly in conventional software: Role X can see data set Y, nothing more. AI agents don't work that way. When an agent pulls from multiple connected systems to answer a question, it can surface information a user was never supposed to see – not because of a misconfiguration, but because the synthesis step doesn't respect the same boundaries the rest of the system does.

A simple example: A front-desk coordinator asks the AI to pull up a patient's upcoming appointments. The agent, connected to both the scheduling system and the clinical notes, returns the appointment – along with medication details from a system the coordinator doesn't have direct access to.

That kind of exposure gets worse when the agent can be manipulated through conversation. A carefully constructed two-step prompt can extract PHI that a standard security scanner would never flag on its own. The mitigation is enforcing access controls at the AI layer itself – before the agent queries any data source – and building agents that follow defined workflows and query only approved knowledge sources. This is the practical distinction between agentic and generative AI in a healthcare context: agentic systems are built to complete work within guardrails, not generate freeform responses.
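The front-desk example can be sketched as a gate that runs before the agent touches any data source. Everything here is hypothetical: the role names, the `ROLE_SOURCES` mapping, and the `gated_query` function are illustrations of the idea, not a real platform's API. What matters is the ordering: the permission check happens first, so a source outside the user's role is never queried at all.

```python
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical mapping of roles to the data sources they may reach.
ROLE_SOURCES: Dict[str, Set[str]] = {
    "front_desk": {"scheduling"},
    "nurse": {"scheduling", "clinical_notes"},
}


def gated_query(
    role: str,
    requested: Set[str],
    sources: Dict[str, Callable[[], List[str]]],
) -> Tuple[Dict[str, List[str]], List[str]]:
    """Query only the sources the role permits; report the rest as denied."""
    permitted = requested & ROLE_SOURCES.get(role, set())
    denied = sorted(requested - permitted)
    # Denied sources are never called, so their data never enters the agent's context.
    results = {name: sources[name]() for name in sorted(permitted)}
    return results, denied


queried: List[str] = []  # records which sources were actually hit


def fetch_scheduling() -> List[str]:
    queried.append("scheduling")
    return ["Tuesday 09:30 follow-up"]


def fetch_clinical_notes() -> List[str]:
    queried.append("clinical_notes")
    return ["medication list"]


sources = {"scheduling": fetch_scheduling, "clinical_notes": fetch_clinical_notes}
results, denied = gated_query("front_desk", {"scheduling", "clinical_notes"}, sources)
```

For the coordinator, only the scheduling source is called, and the clinical notes request shows up in `denied`, where it can be logged and reviewed.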

Synthflow agents operate within that kind of defined logic, with adversarial prompt testing during a 4–8 week enterprise onboarding before any patient call goes live.

Layer 5: Shared Responsibility

Buying a compliant platform doesn't make your organization compliant. The vendor owns platform security – encryption, infrastructure, and breach notification. You own configuration, access management, offboarding, and ongoing risk assessment, and the HHS Cloud Computing Guidance confirms these obligations are non-delegable. If a nurse leaves and her AI credentials stay active, that's your breach, not the vendor's.
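On the customer side, the offboarding gap in that example is simple to check for. The sketch below assumes two hypothetical inputs: a mapping of active AI credentials to their owners, and a current staff roster exported from HR. All identifiers are made up for illustration.

```python
from typing import Dict, List, Set


def stale_credentials(active_credentials: Dict[str, str], current_staff: Set[str]) -> List[str]:
    """Return credential IDs whose owner is no longer on the staff roster."""
    return sorted(
        cred_id
        for cred_id, owner in active_credentials.items()
        if owner not in current_staff
    )


# Hypothetical data: credential ID -> staff member who owns it.
active_credentials = {
    "cred-001": "nurse.alvarez",
    "cred-002": "coordinator.kim",
    "cred-003": "nurse.osei",
}
current_staff = {"coordinator.kim", "nurse.osei"}  # nurse.alvarez has left

to_revoke = stale_credentials(active_credentials, current_staff)
```

Running a comparison like this on a schedule, rather than only at offboarding time, catches credentials that slipped through the normal process.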

Synthflow's US and EU regional data tenants mean PHI stays in its originating region – an infrastructure decision, not a feature toggle. Patient data never routes through third-party middleware.

Bonus Layer: Third-Party Certification

Self-attestation isn't enough because any vendor can claim HIPAA compliance. That’s why you need independent auditing.

- SOC 2 Type I assesses whether security controls are designed correctly at a single point in time.

- SOC 2 Type II goes further, assessing whether those controls operated effectively over 6–12 months. For healthcare procurement, Type II is the meaningful benchmark.

- HITRUST CSF is the gold standard for healthcare specifically, incorporating HIPAA, NIST, and ISO 27001 requirements into a single audited framework. It takes longer and costs more to achieve, which is exactly why it carries weight.

Synthflow lists SOC 2, HIPAA, PCI DSS Level 1, ISO 27001, and GDPR among its compliance credentials, with end-to-end encryption, audit logs, and RBAC across the platform. For a deeper look at how ISO 27001 closes the governance gap for agentic AI at enterprise scale, see Scaling agentic AI: How ISO 27001 closes the governance gap.

"The compliance question most healthcare IT teams get wrong is treating it as a vendor problem. Your AI vendor secures the platform – but who revokes access when an employee leaves? Who decides which calls get recorded? Who audits the AI's data access patterns quarterly? Those are your responsibilities, and no BAA in the world transfers them. The organizations that deploy AI successfully are the ones that build compliance into their operating procedures from day one, not the ones that buy the most expensive tool and assume they're covered."

– Eyal, Director of Professional Services, Synthflow

HIPAA-Compliant AI Agents By Category

Not all AI agents carry the same compliance requirements. The right platform depends on what your agents are actually doing with patient data – and where the PHI exposure lives.

Voice AI Agents: Scheduling, Intake, After-hours, And Call Routing

This category carries the highest compliance bar. Real-time audio becomes ePHI the moment a patient self-identifies, which means you need encryption during the live stream, structured call logging, recording controls, and owned infrastructure so PHI never routes through third-party middleware.

Synthflow

Synthflow is voice-first, LLM-native, and agentic from the ground up. We’re certified across SOC 2, HIPAA, PCI DSS Level 1, ISO 27001, and GDPR – with end-to-end encryption, audit logs, and RBAC.

- At enterprise scale, the Freshworks partnership automated 65% of routine voice requests with a 75% reduction in wait times across a 5,000–10,000 employee global deployment.

- In healthcare specifically, Medbelle saw +60% scheduling efficiency, -30% no-show rates, and 2.5x qualified appointments.

Synthflow owns its telephony infrastructure and operates regional data tenants in the US and EU, so patient data never routes through third-party middleware. Agents operate within defined logic with adversarial prompt testing during enterprise onboarding.

Hyro

Hyro is trusted by 45+ health systems, including Intermountain and Baptist Health, with HIPAA-compliant AI agents focused on patient access workflows. It automates 85%+ of routine patient calls and offers Epic EHR integration.

ElevenLabs

ElevenLabs offers a HIPAA-compliant medical answering service with 24/7 AI-powered phone agents, strong voice fidelity, expressive multilingual voices, data encryption, and team permissions. It is better suited to scenarios where voice naturalness is the primary requirement over end-to-end workflow completion.

Clinical Documentation And Ambient AI

Unlike conversational voice agents, ambient documentation AI works in the background – listening passively during a patient encounter and generating a structured clinical note afterward.

The compliance profile is different, too. The focus shifts from real-time audio encryption to specialty accuracy, EHR workflow integration, and ensuring a physician reviews and signs off before anything enters the record.

Here are the leaders in this space:

- Microsoft Dragon Copilot is the market leader, used across large health systems for encounter documentation that feeds directly into Epic, Cerner, and other major EHRs – all within Microsoft's Cloud for Healthcare compliance framework.

- Abridge earned the top spot in the Ambient AI category in Best in KLAS 2026 and is deployed at health systems including Johns Hopkins and Mayo Clinic. Its contextual reasoning engine is built specifically for clinical nuance – distinguishing between a resolved condition and one being actively managed, for example – which has direct implications for coding accuracy.

- Suki extends beyond documentation into coding suggestions and pre-charting across 100+ specialties. Users report a 60% reduction in burnout and 41% less time spent on notes.

Enterprise Workflow Automation

Revenue cycle, prior authorization, coding, and intake forms involve structured data moving across multiple connected systems – EHRs, CRMs, billing platforms, payers.

The compliance priority here is making sure access controls travel with the data as it crosses those system boundaries, and that the minimum necessary standard is enforced at every step.

- StackAI targets this layer directly. Its platform handles prior authorization workflows, claims processing, denial prevention, and compliance audit readiness for payers and providers. One health system deployment logged over 2.5 million AI agent actions and 475,000 hours saved. The platform is HIPAA compliant, with SOC 2 Type II and ISO 27001 certification.

- Keragon is the HIPAA-compliant workflow automation layer for smaller and mid-market healthcare organizations that need to connect systems without building custom integrations. It offers signed BAAs on all paid plans, end-to-end encryption, zero data retention for AI processing, and 300+ native healthcare integrations, including Athenahealth, Elation, and Healthie. It is trusted by 500+ healthcare companies.

A Note On The "Big Four" AI Agents

When people ask which of the big four AI agents – OpenAI, Google, Microsoft, Anthropic – is HIPAA compliant, they're asking the wrong question. Those are foundation model providers, not healthcare agents. Compliance is built at the application layer by the teams deploying those models. The category of agent matters more than what's running underneath it.

For guidance on evaluating enterprise voice AI vendors across these criteria, see our 2026 enterprise AI buying guide.

HIPAA Compliance Checklist For AI Vendor Evaluation

That's a lot of ground to cover. Here's the short version – every question you should be asking a vendor before PHI goes anywhere near their system.

Business Associate Agreement

- BAA explicitly covers AI-specific data flows, not just traditional SaaS storage

- Prohibition on using PHI for model training is written into the agreement

- Downstream business associate coverage includes the cloud provider hosting the AI

- Post-termination data destruction timelines are defined

- Data minimization requirements are specified

Encryption and data retention

- AES-256 encryption at rest and TLS 1.2+ in transit – minimum, not optional

- Dedicated LLM instance, not shared infrastructure processing PHI alongside other tenants

- Zero-data retention confirmed – session data purged at session end, never written to persistent storage

- Cloud provider's DPA explicitly confirms no retention of PHI

- No session data written to any persistent cache

Audit trails

- Every interaction logged with actor, action, patient identifier, timestamp, and success/failure

- Logs stored in write-once, immutable systems that can't be altered after the fact

- Recording opt-out toggle available for granular control over which calls are captured

- Audit trail meets OCR investigation standards – not just internal reporting

Access controls

- Access controls enforced at the AI layer, not just the application layer

- Agents query only approved knowledge sources through defined workflows

- Cross-system synthesis restricted so the AI can't surface information outside a user's role boundaries

- Adversarial prompt testing completed before any patient call goes live

- Prompt injection safeguards documented and tested

Shared responsibility

- Clear documentation of what the vendor owns vs. what your organization owns

- Your team has a process for credential revocation when staff leave

- Ongoing risk assessment schedule defined, not just at implementation

- Regional data residency confirmed – PHI stays in its originating region, not routed through third-party middleware

Third-party certification

- SOC 2 Type II report available, not just Type I

- Ask for the observation period – anything under 6 months is worth questioning

- HITRUST CSF certification if you need the gold standard for healthcare-specific compliance

- Check certification dates – a 2-year-old SOC 2 report may not reflect the current system
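The two date checks in this list lend themselves to a quick calculation. The sketch below is an assumption-laden illustration: it treats "6 months" as 182 days and "2 years old" as 730 days measured from the end of the observation period, and the function name `soc2_flags` is hypothetical. Adjust the thresholds to match your own procurement policy.

```python
from datetime import date
from typing import List


def soc2_flags(
    period_start: date,
    period_end: date,
    today: date,
    min_period_days: int = 182,  # assumed stand-in for "6 months"
    max_age_days: int = 730,     # assumed stand-in for "2 years"
) -> List[str]:
    """Return the reasons a SOC 2 Type II report deserves a closer look."""
    flags = []
    if (period_end - period_start).days < min_period_days:
        flags.append("observation period under 6 months")
    if (today - period_end).days >= max_age_days:
        flags.append("report is 2+ years old")
    return flags


# Made-up dates for illustration only.
short_and_old = soc2_flags(date(2023, 1, 1), date(2023, 4, 1), today=date(2025, 6, 1))
recent_full_year = soc2_flags(date(2024, 1, 1), date(2024, 12, 31), today=date(2025, 6, 1))
```

The first report trips both checks (a 90-day window that ended more than two years earlier), while the second comes back clean.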

Any vendor who's actually built compliance into their infrastructure should be able to answer every one of these on a first call. If they can't – or if the answers are vague – that's your signal.

Evaluating HIPAA Compliant AI Agents For Your Organization

The five-layer framework above – BAAs, encryption and zero-data retention, audit trails, access controls, and shared responsibility – is also your evaluation checklist. Walk any vendor through those five layers, and you'll know quickly whether their compliance is architectural or cosmetic.

Synthflow was built against this framework. Medbelle saw +60% scheduling efficiency and -30% no-show rates. At enterprise scale, the Freshworks partnership automated 65% of routine calls, with staff freed to focus on complex patient care instead of phone queues. Certified across SOC 2, HIPAA, PCI DSS Level 1, ISO 27001, and GDPR – with owned telephony infrastructure and regional data tenants in the US and EU.

Talk to Synthflow's team about what compliant voice AI looks like in production for your organization – from first conversation to measurable outcomes in weeks.